Has Forecast by Mark Buchanan been sitting on your reading list? Pick up the key ideas in the book with this quick summary.

Since the 2008 financial crisis, the question of how we might better predict such a catastrophe in the future has been at the forefront of economists’ minds. Six years later, we’ve never felt a greater need for a new model of economics.

As Forecast shows, basic assumptions made by economists are so divorced from the economic reality that they almost invalidate the contemporary economic models.

By explaining the flaws in our current economic models and thinking, physicist Mark Buchanan lays the groundwork for a new economics, in which we learn to think of, and analyze, the economy much in the same way meteorologists predict the weather.

Using his knowledge of other fields of research, like biology, physics, psychology or meteorology, Buchanan derives insights that, he argues, could help us to advance the scientific study of the economy.

In this summary of Forecast by Mark Buchanan, you’ll learn:

- how economics began and what the invisible hand refers to;

- about limitations and flaws in current economics;

- why new financial phenomena, like High Frequency Trading and Leveraging, make economies both more efficient and less stable; and

- why economic forecasts should be more similar to weather forecasts.

Forecast Key Idea #1: The concept of equilibrium helped to explain early discoveries in economics.

As any economist worth her salt will know, Adam Smith played a major role in the eighteenth century in developing economics, the scientific study of the economy.

In 1776, Smith wrote the influential The Wealth of Nations, in which he explained how the division of labor could increase a company’s productivity.

Let’s say a company produces pins. If every employee were responsible for just one step in the production chain – for example, one person to cast the metal, and another to smelt it – the company would be far more productive.

Smith also introduced another major concept: the invisible hand. According to his theory, if everyone pursued his or her selfish interests, eventually this would lead to beneficial outcomes for our society.

Applied to the pin company example, the concept works like this: by introducing a division of labor into its production process, the company can offer its pins at a lower price, benefiting both itself (more sales) and society (cheaper pins).

In order to give these early discoveries scientific credibility, economists adopted the concept of equilibrium. They invoked the Greek physicist Archimedes, who used equilibrium to explain the workings of levers and other simple mechanisms. Equilibrium, for Archimedes, was achieved when both arms of a lever bore an equal weight at an equal distance.

Early economists also used Isaac Newton’s theory of gravity to lend credibility to the equilibrium concept. With his theory, Newton claimed that unbalanced forces affect every object, essentially accelerating it until it reaches a state of equilibrium. According to Newton, an apple falling from a tree reaches its equilibrium when it hits the ground.

It was in such august company as Archimedes and Newton that, in 1874, economist Léon Walras adopted the concept of equilibrium in order to explain Smith’s theory of the invisible hand. Using mathematics, Walras demonstrated the way in which both supply and demand eventually come to a state of equilibrium, where the total demand is satisfied by a sufficient supply.

As we’ll see in the following key ideas, the concept of equilibrium still plays a major role in modern economics.

Forecast Key Idea #2: Markets in equilibrium are efficient.

We say that a market is in equilibrium when it contains many suppliers, and product prices decrease until they hit an optimal price point for both buyers and sellers. Thus, as they satisfy both buyer and seller, markets in equilibrium are optimal – or, in other words, they’re efficient.

In 1954, economists were able to show that for every saleable good, there is a specific price at which supply equals demand.

Let’s say that a supermarket puts 100 apples on sale at a price of $1 per apple, and that only 80 people are willing to pay that price.

In this scenario, there are 20 apples unsold. However, if the supermarket offers the apples at a price of 90 cents, it will sell all 100 apples. This is an example of economic equilibrium.
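The apple example can be sketched in a few lines of code. This is only an illustration: the text gives two data points (80 buyers at $1 and 100 buyers at 90 cents), and the straight-line demand curve drawn through them, along with the names `demand` and `clearing_price`, is an assumption made here for the sake of the sketch.

```python
# Hypothetical linear demand curve through the two points given in the text:
# 80 buyers at $1.00 and 100 buyers at $0.90. Prices are in cents so the
# arithmetic stays exact.
SUPPLY = 100  # apples on the shelf


def demand(price_cents: int) -> int:
    """Number of apples buyers want at a given price (assumed linear)."""
    # Slope: 20 extra buyers for a 10-cent price cut = 2 buyers per cent.
    return max(0, 80 - 2 * (price_cents - 100))


def clearing_price(supply: int) -> int:
    """Highest price (in cents) at which demand covers the whole supply."""
    for price in range(200, -1, -1):  # search from $2.00 downwards
        if demand(price) >= supply:
            return price
    raise ValueError("no price clears the market")


unsold_at_one_dollar = SUPPLY - demand(100)
equilibrium = clearing_price(SUPPLY)
print(unsold_at_one_dollar, equilibrium)
```

Under these assumptions, the search stops at the price where nothing is left on the shelf and no willing buyer goes without an apple.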

But that wasn’t all that economists were able to demonstrate. They could also show that the resulting equilibrium of supply and demand is Pareto optimal. This means that the equilibrium represents the best overall outcome for society; i.e., the price or quantity of a good can’t be changed without making someone in society worse off.

If we consider the above example, decreasing the prices of apples would likely lower the supermarket’s revenue. On the other hand, increasing the prices would make the apples too expensive for some households.

In financial markets, the concept of efficiency refers to the rational use of information.

In 1970, economist Eugene Fama developed the Efficient Market Hypothesis (EMH). According to this hypothesis, financial markets are efficient because all new information is immediately reflected in the value of financial goods, such as stocks.

If Apple presents a new, exciting product, investors capitalize on that information and buy shares in the company, which drives up the value of its stock.

The rational use of information causes prices to fluctuate in random, unpredictable patterns, because new information continues to appear unexpectedly.

Markets are considered efficient because they use resources in an efficient way and produce optimal outcomes for society. But is the EMH realistic? Do investors use information in the most efficient way?

Forecast Key Idea #3: Price changes in the financial markets aren’t caused solely by information.

In a 2009 study, economists wanted to find empirical evidence for the EMH. They analyzed how information affected the price level of more than 900 stocks over a period of two years.

One key statistic they were investigating was the volatility of prices. Volatility describes the fluctuation in prices within a given period. If the price doesn’t change much, volatility is low. If it changes a lot, it’s high.
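To make the idea concrete, here is a minimal sketch of one common way to put a number on volatility: the standard deviation of period-to-period percentage returns. The summary does not say which measure the 2009 study used, so this definition, the function name `volatility` and the two price series are all assumptions for illustration.

```python
import math


def volatility(prices: list[float]) -> float:
    """Sample standard deviation of period-to-period percentage returns.

    Needs at least three prices, i.e. at least two returns.
    """
    returns = [(b - a) / a for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance)


# Made-up price series, not data from the study.
calm = [100.0, 100.5, 100.2, 100.4, 100.1]
jumpy = [100.0, 108.0, 95.0, 104.0, 92.0]

print(volatility(calm), volatility(jumpy))
```

The series that barely moves yields the smaller number, matching the informal definition above.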

They also investigated the relation between, on the one hand, the volatility of the observed stock prices, and on the other, information, such as news announced in publications like The New York Times.

If the EMH were true, the volatility of stock prices would rise immediately after important news announcements, and each stock would stabilize once it reached a price that reflects its new value. In contrast, when there is no news, prices should remain fairly stable.

What did their investigation reveal?

In fact, the results of the study contradicted the EMH.

In their examination of stock volatility, they discovered that a change in price levels stabilizes much more quickly if it occurs following some news, than if it happens after no news at all.

Why? Basic human nature.

When we don’t know why something is happening, we panic. If people can’t observe an obvious cause for the fluctuation of their stock price, they lose their nerve and sell.

In contrast, when there’s a clear, observable cause for price fluctuation – say a company announces liquidity problems – the stock prices will drop as well. The defining difference here is that investors know the reason for the drop in price – they have all the information they need. The result is that they don’t become as nervous, allowing prices to stabilize more rapidly.

The EMH claims that price changes of financial goods are caused solely by information. In reality, however, that’s not the case. And even if it were, do people actually use information in the optimal way all the time?

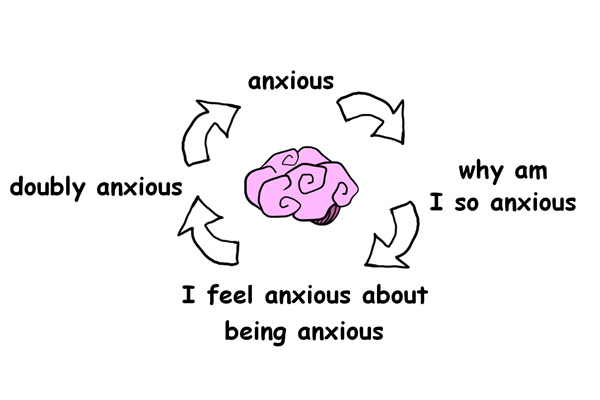

Forecast Key Idea #4: Even if we’re capable of being rational, we certainly don’t behave rationally all the time.

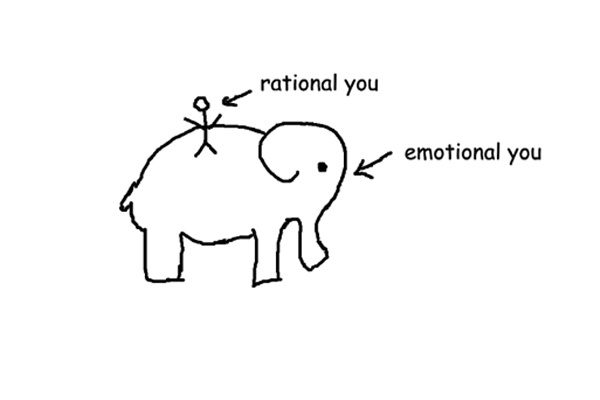

At the basis of the EMH is the assumption that people are consistently rational. However, this is far from the truth.

Richard Thaler, an economist from the University of Chicago, placed an ad in the Financial Times, inviting people to play a simple game. Participants had to choose a number between 0 and 100. The winner? The one who chooses the number closest to two thirds of the average of everyone else’s choice.

What would be the best choice? It is 0.

If all guesses average to 50, then 33 is actually the best guess. Thus, a lot of people might decide to choose 33 instead of 50. However, that will make 22 the best guess. So, if you carry this through to its logical end, you’ll eventually see that 0 is the optimal answer.

If we lived in a world where people consistently used information rationally and optimally, everybody would choose 0. Of course, in Thaler’s game, that didn’t happen. The average of all guesses was 18.9, with the winning entry being 13.
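The chain of reasoning in the game can be traced step by step. The sketch below simply applies "two thirds of the previous best guess" repeatedly, and then checks the reported result against the reported average. The function names are hypothetical, and the starting point of 50 is the one used in the text.

```python
def iterate_best_guess(start: float = 50.0, steps: int = 5) -> list[float]:
    """Repeatedly take two thirds of the previous 'best guess'."""
    guesses = [start]
    for _ in range(steps):
        guesses.append(guesses[-1] * 2 / 3)
    return guesses


def winning_target(average: float) -> float:
    """The number a player had to get closest to, given the actual average."""
    return average * 2 / 3


chain = iterate_best_guess()    # 50, then roughly 33, 22, 15, 10, 7
target = winning_target(18.9)   # roughly 12.6, so an entry of 13 wins

print([round(g, 1) for g in chain], round(target, 1))
```

Each round of "but everyone else will think of that too" shrinks the guess by a third, so the sequence heads toward 0 without stopping anywhere above it. The real players stopped after only a few rounds.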

In fact, it’s questionable whether absolute rationality can exist at all. When we carry a supposedly “rational” process through to its logical conclusion, we observe an absurd chain of events that reveals rationality to be an illusion.

Let’s say that the price of a stock you’ve invested in is falling rapidly, and you’re therefore thinking of selling it. But before making a decision, you have to carry out research, gathering information, so that you’ll ultimately be able to take the best course of action: to sell up or hold onto your stock.

If you were a fully rational creature, you’d spend the optimal amount of time on research and then make a decision. But how can you possibly know what that amount of time is? To determine it, you’d have to decide how much time to spend on thinking about the optimal amount of time for research – which presents the problem of how much time you should take for that step.

As we’ve seen, economic models assume people to be completely rational. However, people don’t behave rationally all the time and it is even debatable whether pure rationality exists.

Forecast Key Idea #5: A financial market can be highly efficient and highly unstable at the same time.

In 2007, the world witnessed the beginning of a major banking crisis, which destroyed a great deal of money. Even though there are countless expert economists out there trying to make sense of our economy, almost none of them anticipated the severity of this crisis.

In an effort to ensure that we’ll never be as blind to such possible outcomes in the future, and thus to help us prevent similar disasters from reoccurring, economists are constantly testing their knowledge of the market.

In one example of this, researchers created a virtual model of the economy.

First, some hedge funds (organizations which invest money) were created to invest in stocks. In addition, these funds competed for investors who would invest in the fund that promised the largest return.

Second, these hedge funds could borrow money from banks in order to increase their returns. This tactic is leveraging – when you invest with borrowed money in addition to your own.

Third, these banks restricted the leveraging of hedge funds. To do so, they used a ratio that determined how much money a hedge fund could borrow based on how much money the fund already had.

If the ratio is 15, a fund could borrow 15 times as much money as it currently has and invest that.
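A short calculation shows why such a ratio matters. The sketch below assumes a fund that borrows the full amount allowed, invests everything in one stock and pays no interest on the loan. These simplifications, and the name `equity_after_move`, are assumptions for illustration and not details of the researchers' model.

```python
def equity_after_move(equity: float, ratio: float, price_change: float) -> float:
    """Fund's own money after a price move, when it borrowed ratio * equity.

    Assumes the loan carries no interest and everything is invested in a
    single stock (illustrative simplifications).
    """
    borrowed = ratio * equity
    invested = equity + borrowed
    return invested * (1 + price_change) - borrowed


own_money = 100.0
gain = equity_after_move(own_money, 15, 0.05)    # stock rises 5 percent
loss = equity_after_move(own_money, 15, -0.05)   # stock falls 5 percent

print(gain, loss)
```

With a ratio of 15, the fund controls 16 times its own money, so every price move hits its own funds 16 times as hard: a 5 percent rise nearly doubles them, and a 5 percent fall wipes out most of them.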

In this simulation, leveraging increased the efficiency of the market. It enabled hedge funds to borrow a lot of money that they could then invest, and because of this, stock prices stayed close to their realistic value.

The problem is that leveraging also makes markets less stable. In studying their virtual model, the researchers observed extreme events happening more frequently with “high leveraging.”

If a stock price drops, it essentially reduces the money that a fund possesses. This forces hedge funds to sell off some of their stocks, thus driving the price of these stocks down also. In turn, this price drop might force other funds to sell their stocks, resulting in a selling race that can destroy a lot of wealth.
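The forced selling described here can be put into numbers. The following sketch assumes a fund that sits exactly at the bank's borrowing limit before the price falls, and that must sell stock and repay debt until it is back within the limit. It ignores the fact that the sale itself pushes the price down further. The function name `forced_sale` and the figures are hypothetical.

```python
def forced_sale(equity: float, ratio: float, price_change: float) -> float:
    """Value of stock a fully leveraged fund must sell after a price move.

    Assumes the fund started exactly at the limit (debt = ratio * equity)
    and that selling does not itself move the price (a simplification).
    """
    debt = ratio * equity
    assets = (equity + debt) * (1 + price_change)
    new_equity = assets - debt
    if new_equity <= 0:
        return assets  # the fund is wiped out and everything is liquidated
    allowed_debt = ratio * new_equity
    # Sale proceeds go towards repaying the part of the debt above the limit.
    return max(0.0, debt - allowed_debt)


sale = forced_sale(100.0, 15, -0.02)  # stock falls by just 2 percent
print(sale)
```

In this example a 2 percent price drop forces the fund to sell 480 out of roughly 1,568 in holdings, close to a third of its portfolio. That selling pressure is what can push the price down again and put the next fund in the same position.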

In conclusion, leveraging makes markets more efficient but less stable. And markets that are unstable cannot be described as being in equilibrium.

Forecast Key Idea #6: Technological advances have made trading cheaper, but they also decrease the stability of financial markets.

Today, computer algorithms play a major role in our culture. They’re hard at work gathering data while we fritter away time on Facebook, and they even play Cupid for many people looking for their perfect match.

It’s unsurprising, then, that algorithms are an integral part of contemporary stock trading. Designed to buy and sell mispriced stocks, such algorithms analyze market data and, on the basis of the results, decide whether to buy or sell. This enables traders to turn a large profit by trading massive volumes of stock.

This practice is known as High Frequency Trading (HFT) and it makes stock trading much cheaper.

And though few firms practice HFT, it has a great impact on the market. In 2010, for instance, even though less than two percent of actively trading firms were engaged in HFT, it nevertheless accounted for 73 percent of the total volume traded. And because the efficiency of the algorithms massively increases the demand for stock trading, HFT actually drives down the cost of trading.

But there’s one problem: HFT decreases the stability of financial markets. For instance, in May 2010, US stock markets experienced what is now known as a flash crash.

HFT algorithms sometimes have an emergency system that automatically sells stocks when a stock price drops below a certain threshold. This happened on a large scale in 2010, when the Dow Jones Industrial Average index dropped 9 percent within 10 minutes.

Some stocks lost value, causing the emergency systems of some traders to kick in and automatically sell stocks. This resulted in a chain reaction in which more and more emergency systems kicked in, automatically selling a tremendous number of stocks.
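The chain reaction can be illustrated with a toy model. It is not a description of how real trading systems work: the sketch assumes that each trader has a single trigger price, that triggers fire one at a time, and that every automatic sale pushes the price down by a fixed amount. The name `cascade` and all numbers are made up for illustration.

```python
def cascade(price: float, thresholds: list[float], impact: float) -> tuple[float, int]:
    """Return the final price and how many automatic sell orders fired.

    Each trader sells once the price is below their threshold, and each
    sale lowers the price by `impact` (a deliberately crude assumption).
    """
    pending = sorted(thresholds, reverse=True)  # highest trigger first
    fired = 0
    while pending and price < pending[0]:
        pending.pop(0)
        price -= impact
        fired += 1
    return price, fired


triggers = [99.0, 98.0, 97.0, 96.0, 95.0]

# A small initial dip to 98.5 trips the first trigger, whose sale trips the next.
crash = cascade(98.5, triggers, impact=1.5)
# A slightly smaller dip to 99.5 trips nothing at all.
calm = cascade(99.5, triggers, impact=1.5)

print(crash, calm)
```

The two runs differ by a single point in the starting price, yet one ends with every trigger fired and the other with none, which is the kind of instability the flash crash exposed.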

Although the stock markets quickly regained their value and were back to normal 30 minutes later, the damage was done and many investors lost a lot of money.

Much like leveraging, HFT is an example of an instrument that, on the one hand, makes markets more efficient (in this case by driving down the cost of trading) and, on the other, places market stability at risk.

These examples reveal serious flaws in conventional economic thinking. So, how could we improve it? One way is to look towards scientific understanding in other fields, to see what it might teach us about our economy.

Forecast Key Idea #7: Earthquakes and market crises share surprising similarities.

What do major earthquakes and market crises have in common? They’re both extremely difficult to predict.

The basic mechanism behind earthquakes is pretty straightforward. While two tectonic plates slide together, one plate gets forced under the other. The resulting friction causes the plates to stick together and store a massive amount of energy that is released when the plates finally slip: the earthquake.

And yet, as straightforward as that may seem, no one was able to predict the 2011 Tohoku earthquake in terms of its timing, location and magnitude.

The same is often true of economic crises: even though we’re able to understand why and how they happened, only a handful of people predicted the recent global economic crisis, which began in 2008.

But the similarities don’t end there.

Just like extreme earthquakes, devastating market crises occur less often than moderate ones.

Throughout history you can find extreme economic crises occurring with regularity, but less frequently than minor crises. And strong earthquakes happen less often than weak ones.

Moreover, even the aftershocks of earthquakes and stock crashes are similar.

Following an extreme market event, such as the global crash of 1987, the probability of big market movements decreases over time. For instance, the stock prices fluctuated greatly immediately following the crash, but these big fluctuations occurred less often as time passed.

A very similar thing happens after major earthquakes: the likelihood of an aftershock is highest immediately following the quake, and declines steadily over time. For example, on the first day after an earthquake the chance of an aftershock is 50 percent. By day 10, however, it’s as low as 10 percent.
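The two figures in this example are enough to sketch a decay curve. The code below assumes that the chance of an aftershock falls off as a power of the time elapsed, a form often used to describe aftershock rates. The summary itself only gives the two data points, so the shape of the curve, the function names and every value in between are assumptions.

```python
import math


def fit_power_law(t1: float, p1: float, t2: float, p2: float) -> tuple[float, float]:
    """Fit p(t) = a * t**(-k) through two (day, probability) points."""
    k = math.log(p1 / p2) / math.log(t2 / t1)
    a = p1 * t1 ** k
    return a, k


def chance(day: float, a: float, k: float) -> float:
    """Assumed chance of an aftershock on a given day after the quake."""
    return a * day ** (-k)


# The two points from the text: 50 percent on day 1, 10 percent on day 10.
a, k = fit_power_law(1, 0.5, 10, 0.1)

for day in (1, 2, 5, 10):
    print(day, round(chance(day, a, k), 3))
```

The same kind of curve could be fitted to the frequency of large price swings in the days after a market crash, which is the parallel the text draws.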

Although they’re different phenomena, earthquakes and economic crises share astonishing similarities – so many, in fact, that economists might benefit from studying research in the field of earthquakes to apply to their own field.

Forecast Key Idea #8: Traditional economic thinking is misguided as it fails to consider that people live by irrational theories.

What’s the best time to go to a bar? When it’s quiet enough to get a seat? Or when there’s enough of a crowd to create a good atmosphere?

Rather than making rational decisions, people tend to rely on theories and strategies, a phenomenon that was illustrated by a puzzle created by Stanford economist Brian Arthur.

Imagine a bar that would be very popular with students. The bar, however, is very small, so if more than 60 percent of the students go, it will become overcrowded. In this scenario, it would be better to stay at home or to find some other form of entertainment for the night.

Let’s now assume that none of the students can discuss their decision with each other beforehand, and that everybody has to decide simultaneously whether or not to go to the bar.

In order to decide, students would likely come up with a theory or two, like, “If it was crowded last week, a lot of students won’t go this week.” Or, “If it was crowded two weeks in a row, it’s likely to be crowded again this week.”

After running a virtual simulation of students using various strategies to make a decision, Arthur noticed something interesting: the weekly attendance quickly averaged at about 60 percent, but it was never stable. Rather, it always fluctuated around this number.
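A stripped-down version of such a simulation fits in a few dozen lines. This is a sketch in the spirit of Arthur's puzzle, not a reproduction of his model: the population of 100 students, the five rules of thumb, the way rules are scored and the random seed are all assumptions chosen for illustration, and the attendance pattern it produces depends on those choices.

```python
import random

N_STUDENTS = 100   # assumed population size
CAPACITY = 60      # the bar is overcrowded above 60 percent
WEEKS = 52

# Hypothetical rules of thumb: each turns past attendance into a forecast.
PREDICTORS = [
    lambda h: h[-1],                      # same as last week
    lambda h: 100 - h[-1],                # mirror image of last week
    lambda h: sum(h[-4:]) / len(h[-4:]),  # average of the last four weeks
    lambda h: h[-2],                      # same as two weeks ago
    lambda h: 2 * h[-1] - h[-2],          # continue last week's trend
]


def simulate(seed: int = 0) -> list[int]:
    """Return weekly attendance for WEEKS weeks."""
    rng = random.Random(seed)
    # Each student carries a random handful of the rules above.
    toolkits = [rng.sample(range(len(PREDICTORS)), 3) for _ in range(N_STUDENTS)]
    errors = [0.0] * len(PREDICTORS)  # running forecast error per rule
    history = [rng.randint(0, N_STUDENTS) for _ in range(4)]  # made-up past

    for _ in range(WEEKS):
        forecasts = [rule(history) for rule in PREDICTORS]
        attendance = 0
        for kit in toolkits:
            # Trust whichever of your own rules has been most accurate so far.
            best = min(kit, key=lambda i: errors[i])
            if forecasts[best] <= CAPACITY:
                attendance += 1  # expects room at the bar, so goes
        # Score every rule against what actually happened (recent weeks weigh more).
        for i, forecast in enumerate(forecasts):
            errors[i] = 0.9 * errors[i] + abs(forecast - attendance)
        history.append(attendance)

    return history[4:]  # drop the made-up starting weeks


weekly = simulate()
print(weekly[:12])
```

No student ever negotiates with another. Each one decides alone, using whichever personal rule has worked best so far, and any rule that becomes popular starts to undermine itself because too many people act on it.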

This demonstrates that a situation can be highly static – every week, students have to make the same decision – and still not arrive at a state of equilibrium.

Arthur’s discovery can help us to make economics more realistic.

Compared to the equilibrium theory, in which supply and demand will over time become equal and create a stable equilibrium, Arthur’s bar example represents a more realistic model of the economic world: There’s no such thing as a stable equilibrium, but prices will fluctuate unpredictably around a certain number, sometimes even resulting in rallies and crashes.

Forecast Key Idea #9: Social influence affects our decision-making and thus should be incorporated into economic models.

Peer pressure: we’ve all experienced it. Whether we like it or not, as social creatures, we tend to conform to the influences of those around us.

In 1951, US psychologist Solomon Asch ran an experiment. He asked people to say which of three lines of different lengths matched the length of another line. The lengths of the three lines differed so much that the correct answer should’ve been obvious.

But when the participants overheard the same wrong answer being given repeatedly, many of them also gave the same wrong answer.

This process of conforming is an automatic and unconscious one.

But social influence doesn’t merely cause us to conform in our behavior. Surprisingly, it also involves a shift in our perception.

In one experiment, researchers investigated what happens in people’s brains when they’re confronted with other people giving the same wrong answer to a question, similar to the Asch experiment mentioned above.

The researchers found that the social influence of other people shifted people’s perception: rather than merely conforming to a social “norm,” the participants actually saw what the other people saw. There was activity in the brain region associated with sight, and no activity in the brain region associated with decision-making (which would have to be the case if they’d consciously decided to conform).

Since social influence plays an important role in decision-making, it should be incorporated into economic theory.

Scientists could run economic simulations, similar to the leveraging simulation described in Key Idea #5, which showed how leveraging made the market more efficient but less stable. They could include social influence as a factor in these simulations: What happens if player A influences player B? What if it’s the other way around?

Since social influence so strongly affects our decision-making and behavior, it should be integrated into economic models.

Forecast Key Idea #10: Like weather forecasts, economic forecasts could be made on the basis of simulations.

If we’re able to predict the weather, could it be possible to do the same for the economy?

Thanks to modern computers and simulation algorithms, it’s possible to forecast the weather with a high degree of accuracy. Take the two supercomputers at the heart of the European Centre for Medium-Range Weather Forecasts in Reading, England. These computers analyze a virtual atmosphere: by taking different meteorological aspects into account, such as air pressure, they simulate possible scenarios.

By simulating and analyzing all kinds of possible scenarios, these supercomputers can forecast wind, temperature and humidity at more than 20 million points from the earth’s surface.

The global economy is far more complex than the weather. Yet, because of the incredible power of modern computers and algorithms, it’s possible that we could use a similar approach for analyzing and forecasting the economy.

Researchers could collaborate and oversee massive simulations that replicate dynamic interactions among the world’s largest financial players.

Computers would calculate and test scenarios taking into account factors like leveraging, risk, density of interconnection, different financial transactions or social influence, in order to evaluate weak points or the resilience of a financial system.

But this would require the collection of tremendous amounts of data. How could we possibly source it?

Luckily, we’re now at a stage where we can install data-collection technology in many everyday objects.

Mass data collection may be most useful in analyzing how we interact. From our smartphones we can now track our daily lives: for example, we could link data of our location, browsing habits and communications to special patches, which, when attached to our body would record our hormone levels.

With this data, we’d be able to analyze all manner of human behaviors and learn about the force which directs the economy the most – us.

In Review: Forecast Book Summary

The key message in this book:

Modern economics is fundamentally flawed. It relies on assumptions that are anything but realistic, essentially diminishing the validity of its concepts and models. By looking at discoveries in other scientific fields, such as psychology or physics, economics might develop and thus capture more precisely what is happening in our economy.

Actionable advice:

Look beyond the field of economics to find answers to the most pressing economic issues.

Researchers in the field of economics should take discoveries of important related fields into account in attempts to advance economic theory.

Suggested further reading: Economics: The User’s Guide by Ha-Joon Chang

Economics: The User’s Guide lays out the foundational concepts of economics in an easily relatable and compelling way. Examining the history of economics as well as some critical changes to global economic institutions, this book will teach you everything you need to know about how economics works today.