Has Normal Accidents by Charles Perrow been sitting on your reading list? Pick up the key ideas in the book with this quick summary.

Flying in a plane is safe, right? Every day, thousands of aircraft take off from runways and arrive safely at their destinations, and nobody worries about them.

Decades of research into safety mechanisms, safeguards, and updated procedures mean that flying today is safer than it has ever been.

But air travel, like many of the potentially disastrous systems that are part of our social and economic fabric, is highly complex – when something does go wrong, it goes very wrong indeed.

Normal Accidents tells the story of these rare incidents and their impact.

First published in 1984, the book warned against the further adoption of nuclear power. Nuclear power plants, like flight, carry risks that are often mitigated by safety precautions, but can cause unparalleled damage thanks to something as trivial as a loose screw.

The author’s assertion that these accidents can and will happen despite our best efforts to contain them has been sadly vindicated by the 1986 Chernobyl and 2011 Fukushima nuclear disasters, as well as numerous major oil spills and two space shuttle tragedies.

In this book summary, you will discover how these highly complex systems work, and how certain systems are more prone to terrible catastrophe than others. In addition, you will learn to assess the inherent risks of systems, and end up asking yourself whether it might not be better to simply abandon some systems altogether!

In this summary of Normal Accidents by Charles Perrow, you’ll find out:

- how two ships about to safely pass each other on the Mississippi River ended up in a non-collision course collision,

- how “papering over the cracks” near the Grand Teton Dam cost eleven people their lives, and

- how three astronauts narrowly avoided suffocating to death in space.

Normal Accidents Key Idea #1: Some disasters are both inevitable and unpredictable.

Imagine you have an important job interview. Preoccupied, you step out the door without your keys, and the door locks behind you. That’s OK – you keep a door key hidden nearby.

Wait, you forgot – your friend has it, and he lives across town. So you ask your neighbor for a lift, but his car won’t start.

You rush to the bus stop only to discover that there’s a bus drivers’ strike, and all the taxis are occupied. Discouraged, you cancel the interview.

Sometimes it just works out that way: rare sequences of events thwart precautions, such as safety devices, workarounds and backups.

For example, your hidden key was a backup, but it failed because you changed your plan and gave it to a friend. The bus system couldn’t serve as a workaround because its drivers were on strike, and the resulting lack of cabs shows how dependent on one another, or coupled, the two transport systems were.

None of these errors would be a problem on their own. However, when they all interact with each other unexpectedly, they cause the entire system – and in this case your job interview – to fail.

System accidents, where multiple failures result in disaster, are often so complicated that we can neither foresee nor comprehend them in real time.

System accidents arise from a range of errors: in Design, Equipment, Procedure, Operator, Supplies and an unpredictable Environment – or DEPOSE, for short.

The door-locking mechanism and lack of emergency taxi capacity, for example, were design errors. When the neighbor’s car failed to start, it was an equipment error. Not allowing extra time for delays on the way to your interview was a failure in procedure.

The bus strike and the taxi shortage were failures in the environment. And finally, absent-mindedly leaving the house without your keys was an operator error.

Of course, a system accident in which you miss an interview isn’t the end of the world. But what happens when one occurs at something like a nuclear or chemical plant?
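The DEPOSE tagging of the missed-interview story can be sketched in a few lines of Python. This is only an illustration: the `Depose` enum, the helper functions and the short failure labels are hypothetical names chosen here, not terminology from the book beyond the six category names.

```python
from collections import Counter
from enum import Enum


class Depose(Enum):
    """The six DEPOSE failure sources named in the text."""
    DESIGN = "Design"
    EQUIPMENT = "Equipment"
    PROCEDURE = "Procedure"
    OPERATOR = "Operator"
    SUPPLIES = "Supplies"
    ENVIRONMENT = "Environment"


# Failures from the missed-interview story, tagged the way the summary tags
# them. The short labels are illustrative wording (an assumption).
INTERVIEW_FAILURES = [
    ("door locks automatically", Depose.DESIGN),
    ("no emergency taxi capacity", Depose.DESIGN),
    ("neighbor's car will not start", Depose.EQUIPMENT),
    ("no extra time allowed for delays", Depose.PROCEDURE),
    ("bus drivers' strike", Depose.ENVIRONMENT),
    ("taxi shortage", Depose.ENVIRONMENT),
    ("left the house without keys", Depose.OPERATOR),
]


def count_by_source(failures):
    """Count how many failures fall under each DEPOSE source."""
    return Counter(source for _, source in failures)


def is_multi_source(failures):
    """True when the failures come from more than one DEPOSE source."""
    return len(count_by_source(failures)) > 1
```

Calling `count_by_source(INTERVIEW_FAILURES)` shows that the story draws on five of the six sources at once, which is what makes it a system accident rather than a single mishap.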

Normal Accidents Key Idea #2: A system may be simple to understand, but that doesn’t make it dependable.

Why is it that some complex organizations or technologies succeed, while simpler ones fail spectacularly at the first hurdle? To answer this, we’ll need to take a look at the characteristics of systems.

Systems can be categorized both by their complexity and by the flexibility of their processes.

Some involve complex interactions, which are difficult or even impossible to predict. In complex systems, components sit close together and are interconnected, so they provide only indirect or ambiguous information to system operators like pilots or power plant technicians.

Linear interactions, on the other hand, are easy to predict. Here, the components are segregated, and therefore provide direct information.

Systems can also be tightly coupled, so processes have invariable sequences and delays aren’t possible. They contrast with loosely coupled systems, where processes are flexible and allow for delays.

A system’s specific combination of complexity and coupling will affect its functionality.

Universities, for example, are complex systems. They’re massive organizations with multiple interacting components that aren’t easily predictable. However, they’re also loosely coupled. If a professor falls ill and can’t teach, substitutes can be found without the entire system grinding to a halt.

Dams, on the other hand, are linear systems with tight coupling, making workarounds and recoveries less reliable. Being linear, they aren’t subject to unexpected interactions and are therefore free of system accidents.

However, the fact that they are tightly coupled means that a single component failure often can’t be remedied or isolated, which then triggers failure in other components, and on rare occasions leads to catastrophe.

And that’s exactly what happened in 1976, when the Grand Teton Dam in Idaho collapsed, killing eleven people and destroying $1 billion in property.

Prior to the disaster, geologists had found cracks in the surrounding rocks, which they knew even a small leak could exacerbate. So they filled the cracks with cement. However, their solution couldn’t handle the high pressure in the dam, which in turn led to the leak that caused the disaster.
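The two axes described in this section can be sketched as a small data model. The snippet below is an illustrative assumption, not something from the book: `System`, `Interactions`, `Coupling` and `risk_profile` are hypothetical names, and the two booleans simply restate the text's claims that complex interactions bring unexpected interactions and that tight coupling makes failures hard to isolate.

```python
from dataclasses import dataclass
from enum import Enum


class Interactions(Enum):
    LINEAR = "linear"    # segregated components, direct information
    COMPLEX = "complex"  # interconnected components, ambiguous information


class Coupling(Enum):
    LOOSE = "loose"  # flexible processes, delays possible
    TIGHT = "tight"  # invariable sequences, no room for delay


@dataclass(frozen=True)
class System:
    name: str
    interactions: Interactions
    coupling: Coupling


def risk_profile(system: System) -> tuple[bool, bool]:
    """Return (unexpected_interactions, failures_hard_to_isolate)."""
    unexpected = system.interactions is Interactions.COMPLEX
    hard_to_isolate = system.coupling is Coupling.TIGHT
    return unexpected, hard_to_isolate


# The two examples used in this section.
UNIVERSITY = System("university", Interactions.COMPLEX, Coupling.LOOSE)
DAM = System("dam", Interactions.LINEAR, Coupling.TIGHT)
```

Here `risk_profile(UNIVERSITY)` gives `(True, False)` and `risk_profile(DAM)` gives `(False, True)`, matching the text: the university is unpredictable but forgiving, while the dam is predictable but unforgiving.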

Normal Accidents Key Idea #3: The Three Mile Island nuclear accident showed the precariousness of high-risk industries.

So what do system accidents actually look like? In 1979, the core at the Three Mile Island (TMI) nuclear power plant partially melted and came close to releasing massive amounts of radioactive material into the environment.

In a complex and tightly coupled system like TMI, where interactions are often unpredictable yet also unable to handle delays, trivial and unrelated failures can interact to cause a system accident.

At TMI, four small, separate failures caused the accident. What’s worse, they all occurred within 13 seconds of each other, so operators had no opportunity to react or even acknowledge them.

The first failure occurred when water leaking from a seal caused moisture to enter the air system. A false signal told the water pumps to stop, which, as a precaution, shut down the turbine used to generate power.

The second failure involved the emergency water pumps, which were inoperable due to some closed valves. As a result, the core’s temperature and pressure rose.

Luckily, TMI was outfitted with safety devices. However, as in all system accidents, these are sometimes ineffective or can even hinder recovery.

For example, when an automatic safety device (ASD) opened to relieve the pressure, it got stuck – the third failure.

The ASD signalled that it had shut, but had actually remained open – the fourth failure.

Faults in the coolant system that are still not understood disguised the destabilization of the core, and led to the overheating of the coolants themselves.

The operators followed safety procedures and reduced the cold water feed, but there was still a major problem: due to a lack of heat removal, the core was uncovered and a hydrogen bubble had formed.

Two hours later, operators finally identified the malfunctioning ASD and closed it manually, and were then able to cool down the reactor and bring everything back under control.

TMI could easily have gone the other way, and the accident offers us a valuable lesson: complexity and coupling are inherent in our riskiest industries.

Normal Accidents Key Idea #4: The safety of some complex and tightly coupled systems, like air travel, improves over time, but disasters still happen.

You often hear that it’s safer to fly than to drive a car. And truly, flying is relatively safe.

Over the years, repeated flight trials and experiences with error management have all inspired better designs and safety measures, which have in turn resulted in fewer and fewer crashes per number of flights.

A reduction in complexity and coupling has also greatly decreased the risks in flying. For example, today’s jet engine design is even less complex than the piston engine!

Moreover, the air traffic control system has evolved to be less tightly coupled, by, for example, restricting access for different types of aircraft to different areas. As a result, there are almost no mid-air collisions.

Nevertheless, despite these improvements in safety, the inherent complexity and tight coupling of flying means there will always be crashes.

For example, while jetliners offer the safest mode of flying, the DC-10 (a once-popular airliner that entered service in the early 1970s) has a history of catastrophic accidents.

However, rather than system accidents, most of these were component failure accidents, meaning they had a single cause that resulted in other failures.

For instance, in 1979 at Chicago O’Hare, one of three engines of a DC-10 was hastily serviced before takeoff. To the horror of the passengers, that engine tore off mid-flight.

While the plane could still fly with the other two engines, three resulting failures – the retraction of the left wing’s leading edge slats, loss of the slat disagreement warning system and loss of the stall warning system – caused the crew to lose control of the plane, resulting in 273 deaths.

In spite of the great improvements in air traffic safety, a simple maintenance error, combined with design errors in the slats, led to hundreds of deaths. Flying, with its multitude of components, unpredictable interactions, and pressure to maintain a tight schedule, will never be risk-free.

Normal Accidents Key Idea #5: Petrochemical plants suffer from system accidents, but rarely from unpreventable catastrophes.

The dangers of nuclear power are well known, but the risks involved in petrochemical plants pass largely under our radar. However, they are considerable, and we should be informed.

The petrochemical industry – like the nuclear business – employs transformative processes, whereby new products are created from raw materials that are often toxic or explosive. In spite of this, the chemical industry has been in business for over a hundred years and has suffered few catastrophic accidents. There have been relatively few unpreventable system accidents resulting in high casualty figures, but they do indeed occur.

For example, at a chemical plant in Texas City in 1969, no one could have predicted the sequence of events that saw a leaky valve component fail and the subsequent interactions between temperatures, pressures and gases that combined to produce an enormous explosion.

Surprisingly, no one was killed, although some nearby houses were damaged.

However, while unpreventable system accidents have occurred, preventable accidents in petrochemical plants have resulted in many deaths.

For example, in 1984, a chemical plant in Bhopal, India released lethal gas into the environment, directly resulting in the deaths of 4,000 people in nearby shanty towns, and injuring 200,000 others.

This was no system accident, however, but an accident waiting to happen. Due to financial difficulties, the company running the plant had laid off key staff (including maintenance workers), cut back on refrigeration devices and left safety devices in disrepair.

In fact, prior inspections had even identified poorly trained staff and leaky valves.

Worse: no alarms sounded. Authorities were not informed of the accident and even the medical officer misinformed the public and police, telling them that the gas was not dangerous.

Even after decades of complication-free production, it only takes the right mix of circumstances to cause a catastrophic system accident.

But how is it that system accidents occur in spite of safety precautions? Read on to find the answer!

Normal Accidents Key Idea #6: Sometimes safety devices and automation actually increase the likelihood of a system accident.

Have you ever been under so much pressure at work that, in a frantic haste to finish, you ended up making some little mistakes? Something similar happens in systems, too.

System accidents usually don’t happen because of production pressures, but these pressures do increase the likelihood of small errors, which can cascade into something uncontrollable.

Consider the captain of a large BP oil tanker, who was told to deliver his cargo to a west Wales terminal before high tide made it impossible to unload for another five days.

To accomplish this, our faithful captain calculated that he needed to steer the tanker through the Scilly Isles in the Channel, a quicker – but more dangerous – route than he would have otherwise planned.

At full speed, the captain noticed that he’d overshot and tried to turn the tanker, but nothing happened! Only when it was too late did he realize he needed to switch the control of the wheel from automatic to manual. The tanker crashed. 100,000 tons of oil seeped out along the English and French coasts.

As this tragic event shows, technology designed to aid in safety and navigation, such as automatic steering, can actually increase the chances of accidents.

In fact, statistics showed that while it was increasingly rare for marine accidents to be caused by mechanical failures, overall accidents were actually increasing.

Most of these accidents were due to an operator’s haste or poor judgement. This happened despite the adoption of collision avoidance systems, which calculate ships’ trajectories and speeds, and aid communication between vessels.

Regrettably, it appears that companies use these technological advances to decrease operating expenses and demand quicker delivery in worse weather and through busy channels. Operators, in turn, take more risks to keep up.

Normal Accidents Key Idea #7: While the best designs may be flawed, and management impotent, recovery from failure is possible.

Although the Apollo 13 space mission was en route to a moon landing, the ship never touched the moon’s dusty surface. Instead, the crew almost suffocated in the vacuum of space.

Why?

Despite the contributions of countless experts and billions of dollars in funding, there were still design flaws. For example, one of two oxygen tanks on board had never been tested in space. In fact, a trial on Earth resulted in the craft’s insulation burning up due to wiring faults. This was never fixed.

In space, these wires created an electrical discharge, generating heat which blew off the cap of the oxygen tank and emptied it. Then the second tank started leaking.

Nothing on board notified the crew or mission control that one of the tanks had exploded. Even if it had, the warning lights themselves were misleading.

Moreover, the astronauts were only trained for failures of one or two components, and even then relied on safety devices and backups.

Only when one of the astronauts looked out the window and saw gas escaping from the tanks did they realize that there was a problem.

Despite all this, they recovered. How? By simplifying and loosening the system.

All three astronauts squeezed into the tiny landing module, which had been designed for two astronauts to travel the short distance to the moon from orbit. Water and power had to be carefully rationed.

Things had to get simpler: there would be no moon landing.

They conserved fuel by using the moon’s gravity to slingshot the craft back to Earth. Automated devices were discarded, and the astronauts took control of the craft. They even simplified the operating procedures for re-entry on the spot.

This simplification gave them extra time, and meant the system’s coupling became looser and less prone to total collapse.

Thankfully, they escaped what could have been certain death; their improvised “life raft” landed in the ocean and the astronauts were saved.

Normal Accidents Key Idea #8: Our intuitions can deceive us and cause accidents.

One day on the Mississippi River, two ships, the Pisces and the Trade Master, were on course to safely pass one another. Yet, at the last minute, they turned into each other’s paths and collided. How on Earth did this happen?

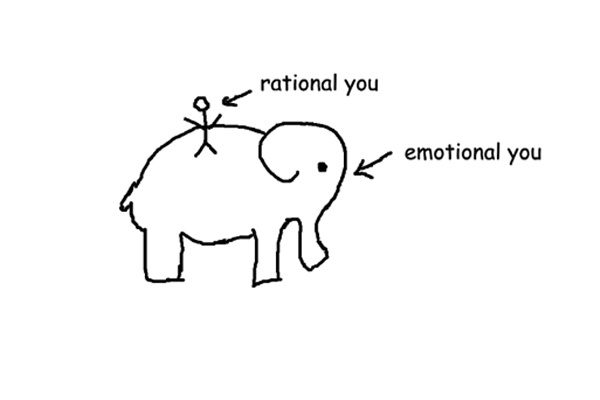

Partly it’s a matter of intuition. Since we cannot pore over all possible outcomes when making a decision, we base our judgements on hunches and rules of thumb, and while these intuitions are convenient, they’re also sometimes flat-out wrong.

In fact, some of our intuitions directly contradict what we know to be true. For example, if a coin has come up heads 20 times in a row, we intuit that it is far more likely to be tails next, ignoring the basic statistical properties of a coin flip: at each flip, the chance for tails is always 50 percent.
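The coin-flip claim is easy to check with a small simulation. The sketch below is an illustration under stated assumptions: the function name `tails_rate_after_streak` is hypothetical, the coin is assumed fair, and a streak of 5 heads stands in for the 20 in the text, because streaks of 20 are too rare to observe in a short run.

```python
import random


def tails_rate_after_streak(streak: int, flips: int, seed: int = 0) -> float:
    """Estimate P(tails) on the flip right after `streak` heads in a row."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    run = 0        # consecutive heads seen just before the current flip
    followups = 0  # flips that came right after a qualifying streak
    tails = 0      # how many of those follow-up flips were tails
    for _ in range(flips):
        is_heads = rng.random() < 0.5
        if run >= streak:
            followups += 1
            if not is_heads:
                tails += 1
        run = run + 1 if is_heads else 0
    if followups == 0:
        raise ValueError("no streak of that length occurred; use more flips")
    return tails / followups


if __name__ == "__main__":
    # The estimate should land close to 0.5, not well above it.
    print(tails_rate_after_streak(streak=5, flips=400_000))
```

If the intuition were right, the estimate would sit well above 0.5. Because each flip is independent of the ones before it, the estimate stays near one half however long the preceding streak is.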

In fact, even highly experienced operators can construct an understanding of the world that is totally divorced from reality. That’s where the bizarre accidents called non-collision course collisions come in.

Back to our ships on the Mississippi: the captains agreed over the radio to pass starboard to starboard.

Before they reached one another, however, the Pisces thought that a tug boat was overtaking, and, believing that her current trajectory would lead to a collision between the tug and the Trade Master, turned sharply toward the shore and radioed the Trade Master to request a port-to-port side pass.

The Trade Master saw the maneuver, but did not hear the signal. She assumed the maneuver was a mistake that the Pisces would correct.

When that didn’t happen, the Trade Master also turned to the shore sharply, hoping to avoid the tug that was supposedly behind the Pisces, and push into the soft bank of the river.

All of these assumptions and intuitions about the other ship’s behavior caused their paths to intersect, and they collided, causing millions of dollars of damage.

As we have seen, certain systems carry considerable risks. The final key ideas will show you how to assess that risk, and what to do with that information.

Normal Accidents Key Idea #9: Risk assessment isn’t just about the numbers.

Do you know anyone who smokes cigarettes and at the same time condemns nuclear power? Though it might sound strange, such contradictions are natural, as we tend to minimize some dangers and maximize others.

For example, risk assessors, engineers and economists tend to employ precise and quantitative goals, facilitated by mathematical models. This absolute rationality ignores the social or cultural aspects of risk and safety.

Some have even quantified the value of a human life: $300,000.

These rationalists don’t see a difference between deaths that occur under different circumstances. To them, the 100,000 annual traffic-accident deaths are no different to the same number of deaths from a single catastrophe. Everything is just a statistic.

This kind of thinking isn’t merely bizarre, it also has broader social implications. Consider, for example, that the entire fuel cycle of coal, from mining to production, kills about 10,000 people per year, while nuclear power kills very few in comparison. An absolute rationalist would favor – and perhaps even advocate – nuclear power.

In contrast, most lay people have a misunderstanding of risk and safety, skewed by memories of catastrophes and fear of the future.

Since the public doesn’t possess the expert knowledge necessary to understand the technical reports of regulators, they instead place an emphasis on community spirit and family values. As a result, they are more concerned with uncertainties about new technologies and their impact on their children, a concern known as dread risk.

For example, studies have shown that the public believes that nuclear power is riskier than hazards like cars, guns and alcohol. Experts in various fields, however, rated nuclear power as comparatively less risky.

Further questions revealed that the public focuses on rare disasters, rather than historical records, when assessing the risk of fatality. However, more dangerous yet more familiar activities, such as driving, were given relatively low risk ratings.

Normal Accidents Key Idea #10: We must reorganize some systems and abandon others altogether.

If we know that system accidents can be terribly disastrous, what can we do to balance their risks against the benefits that those systems bring to our societies?

One way to ease the risk of system accident is to centralize or decentralize the system, depending on its complexity and coupling.

As we’ve seen, linear systems with tight coupling, such as dams and marine transport, should be centralized. Here, failures occur in expected ways and require an immediate, precise response prescribed in advance by a central authority.

Complex systems with loose coupling, such as universities, should be decentralized. Here, failures may arise unexpectedly, but operators have the time to diagnose and analyze these unexpected situations.

Complex and tightly coupled systems, like nuclear and chemical plants, aircraft, space missions and nuclear weapons, however, require both centralization and decentralization: centralization for an urgent, disciplined response, and decentralization to allow for a thorough search for the cause of the unexpected interactions.

Unfortunately, there’s no easy, cost-effective and safe way to do this.
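The three recommendations above amount to a small lookup table, sketched here in Python. The names `AUTHORITY_BY_QUADRANT`, `recommended_authority` and `has_conflicting_demands`, along with the string labels, are illustrative assumptions. The linear and loosely coupled case is left out because this summary does not discuss it.

```python
from typing import Optional

# Keys are (interactions, coupling); values are the authority structure the
# summary recommends for that combination. Labels are illustrative only.
AUTHORITY_BY_QUADRANT = {
    ("linear", "tight"): "centralize",
    ("complex", "loose"): "decentralize",
    ("complex", "tight"): "centralize and decentralize",
}


def recommended_authority(interactions: str, coupling: str) -> Optional[str]:
    """Return the recommended structure, or None if the summary is silent."""
    return AUTHORITY_BY_QUADRANT.get((interactions, coupling))


def has_conflicting_demands(interactions: str, coupling: str) -> bool:
    """True for the one combination that needs both structures at once."""
    return (interactions, coupling) == ("complex", "tight")
```

For example, `recommended_authority("linear", "tight")` returns `"centralize"` for a dam, while `has_conflicting_demands("complex", "tight")` flags a nuclear plant as the case where the two requirements pull against each other.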

Furthermore, depending on their complexity and coupling, certain technologies should be improved, restricted or abandoned altogether.

One way to figure out which solution is appropriate is to assess a technology’s catastrophic potential, referring to its capacity to affect both innocent bystanders and future generations, rather than those who knowingly take on the risk, like system operators or passengers on a plane.

For this reason, technologies with low to medium catastrophic potential – i.e., generally causing deaths only to operators, suppliers or users of the system – such as space missions, dams, chemical production or flying, can continue to be used, although we must take steps to improve their safety.

Those with substantial catastrophic potential but a lack of viable alternatives, such as marine oil transport, should be restricted in order to avoid their worst possible outcomes.

Finally, we should abandon nuclear power and nuclear weapons. These pose the greatest danger both to innocent bystanders and, potentially, to the future of humans on Earth.

In Review: Normal Accidents Book Summary

The key message in this book:

Systems come in all shapes and sizes, and some are more at risk of major shutdowns and catastrophes than others. In fact, some are so complex and intricate that there is literally no way to stop a disaster once it has begun. To manage this, we need to decide which systems are worth keeping, and which should be abandoned altogether.

Suggested further reading: Brave New War

Technological advances like the internet have made it possible for groups of terrorists and criminals to continuously share, develop and improve their tactics. This results in ever-changing threats made all the more dangerous by the interconnected nature of the modern world, where we rely on vital systems, like electricity and communication networks, that can be easily knocked out. Brave New War explores these topics and gives recommendations for dealing with future threats.