Has Risk by Dan Gardner been sitting on your reading list? Pick up the key ideas in the book with this quick summary.

For years now, we’ve been piecing together a more complex picture of the brain and how it works. And psychologists, neuroscientists and other great thinkers have found that – when it comes to our emotions and decision-making processes – we’re not always thinking the way we think we are.

This book summary applies our generation’s advanced understanding of the brain to the concept of fear. This is especially pertinent today, when it seems like there’s a particularly large number of things to be afraid of.

But you’ll see that the human brain isn’t so good at judging fear. Once you know that – and the ways other people take advantage of it – you’ll realize the emotions you should feel in the twenty-first century are gratitude and relief. After all, we’re living in one of the least frightening times ever!

In this summary of Risk by Dan Gardner, you’ll find out

- how likely you are to die in a terrorist attack;

- how high dolphins can count; and

- how pharmaceutical companies get us to buy medicine we don’t need.

Risk Key Idea #1: Modern society is chock-full of false fears.

We’re constantly being told that the world is under threat, whether it’s from terrorism, climate change or global epidemics. It seems we live in dangerous times. But do we?

We currently live in a so-called risk society. Ulrich Beck coined the term in 1986 to describe societies in which there’s a high sensitivity to risk, whether it’s cancer or nuclear war. The United States and Europe are both good examples.

Beck noticed that risk societies were spreading throughout the world, especially as people grew more afraid of technological advancements.

As technology improved, our news outlets became filled with stories intended to scare us. In fact, a study conducted by Eurobarometer in 2006 found that 50 percent of Europeans believed their cell phones were a threat to their health. Meanwhile, stories of terrorism, cancer, obesity and gluten intolerance have taken over our media.

Most of these frightening stories are exaggerated, however. Frequently people don’t even understand the things they’re afraid of, like cancer.

In a 2007 Oxford study, researchers asked women at what age they thought they were most likely to develop breast cancer. Twenty percent said it was their fifties, and over half said that age wasn’t even a factor.

Only 0.7 percent knew the real answer: breast cancer is most common among women over the age of 80. Age is the single greatest determinant of breast cancer, not cell phones or anything else.

And the only thing that rivals many people’s fear of cancer is their fear of terrorism, despite the fact that, statistically speaking, it’s very unlikely that you’ll die in a terrorist attack. It would be much more logical to fear the flu: 36,000 Americans die every year due to flu-related complications.

Risk Key Idea #2: Our misconceptions about risk stem from the way our brains are hardwired.

Our brains are ancient, so when it comes to risk perception, it’s like using really outdated hardware to run the latest and most sophisticated software.

Human brains underwent a big change in the Stone Age. About 500,000 years ago, they grew from 650 cubic centimeters to 1,200 cubic centimeters. That’s only slightly smaller than our current brain size of 1,400 cubic centimeters.

The final jump to 1,400 cubic centimeters occurred 200,000 years ago when the Homo sapiens species was born. DNA analysis has proved that every person alive today can be traced back to a single Homo sapiens ancestor from only 100,000 years ago.

But our brains haven’t changed a lot since then. Agriculture was developed 12,000 years ago and the first cities were built 4,600 years ago. Brain development didn’t progress nearly as fast as our environments did. The world has changed dramatically, but our brains have basically stayed the same.

Take the way we perceive snakes. Everyone is born with a fear of snakes. It’s hardwired into our brains because it helped our ancestors survive and pass on their genes. Even people from places with no snakes, such as the Arctic, have an innate fear of them. Car accidents are a much bigger threat to our safety, but we haven’t evolved to fear cars.

The “Law of Similarity” is another holdover from the past. By the Law of Similarity, humans believe that things are similar when they look similar.

That’s why the Zande people of North Central Africa thought chicken feces caused ringworm: they look the same. Another study found that people were reluctant to eat fudge when it was shaped to look like dog feces, even though they knew it was just fudge.

Risk Key Idea #3: There are two cognitive systems in our brain, and they process risk differently.

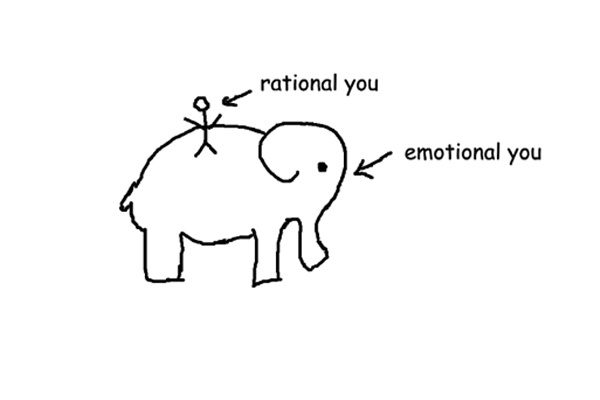

Our brains play some funny tricks on us. Daniel Kahneman won a Nobel Prize for illuminating one of them: we have two distinct brain systems that help us reason, and they produce different results!

The first is called System 1 or gut. It runs quickly and without your being conscious of it. You’re using System 1 when you intuitively feel something is right or wrong without knowing why.

System 1 operates based on a few simple rules. The Law of Similarity is part of System 1: if something looks like a lion, it’s probably a lion, and you should get away from it.

The problem is that System 1 is often inaccurate and doesn’t adapt well to new situations. Remember the snakes? System 1 tells you to jump in fear even if you see them in a movie and know they can’t cause you any harm.

The strength of System 1 can be illustrated by a simple math problem: let’s say a bat and a ball cost $1.10 in total. The bat costs $1 more than the ball. How much does the ball cost?

Most people intuitively answer ten cents because it feels right, even though that’s not the right answer. It’s a simple math problem, but it trips people up because it goes against our gut.

System 2 or head runs on conscious thought. System 2 is at work when you carefully think through a problem or situation. It reminds us to calm down when we feel frightened of terrorist attacks because it knows they’re unlikely to affect us.

But System 2 also has its flaws. First off, it’s slow. Second, it has to be fed by education. We need to utilize our math skills to determine that the ball actually costs five cents.
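The math System 2 has to do here is short. The sketch below is only an illustration: the function name `solve_bat_and_ball` is made up for this example, and prices are kept in cents to avoid rounding problems.

```python
def solve_bat_and_ball(total_cents: int, difference_cents: int) -> tuple[int, int]:
    """Return (ball, bat) prices in cents.

    ball + bat = total and bat = ball + difference,
    so 2 * ball + difference = total.
    """
    ball = (total_cents - difference_cents) // 2
    bat = ball + difference_cents
    return ball, bat


ball, bat = solve_bat_and_ball(total_cents=110, difference_cents=100)
print(ball, bat)  # 5 105

# The gut answer fails the check: a 10-cent ball implies a $1.10 bat,
# which adds up to $1.20 rather than $1.10.
gut_ball = 10
print(gut_ball + (gut_ball + 100))  # 120
```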

Risk Key Idea #4: Our gut is guided by two rules that can push us into making illogical decisions.

Our System 1 gut reactions often lead us to make rash decisions because they rely on heuristics. Heuristics are basically cognitive shortcuts that tell us what to do.

The Rule of Typical Things is an example of this. It states that when a question contains information we find typical, our intuition takes over when we formulate an answer.

The Rule of Typical Things can be illustrated by Kahneman’s famous Linda problem. Linda is bright and outspoken. She majored in philosophy, participates in anti-nuclear protests and is passionate about social justice.

Which is more likely: a) that Linda is a bank teller or b) that Linda is a bank teller who is active in the feminist movement?

Eighty-five percent of Kahneman’s students answered “b,” even though it’s clearly wrong. It’s more likely that she’s just a bank teller than a bank teller and a feminist. Option “b” can only be true if option “a” is also true, and it adds a further condition, so it can never be the more likely of the two.

The logic here is simple, but your gut takes over because of the Rule of Typical Things. She studied philosophy, she’s an activist and she cares about social justice, so we assume she’s a feminist.
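The logic can be checked with a little arithmetic. The probabilities below are invented purely for illustration and don't come from Kahneman's study; whatever values you pick, the combined option can never come out more likely than "bank teller" alone.

```python
from fractions import Fraction

# Hypothetical numbers, chosen only for illustration.
p_teller = Fraction(1, 20)                 # P(Linda is a bank teller)
p_feminist_given_teller = Fraction(9, 10)  # P(feminist | bank teller)

# P(teller AND feminist) = P(teller) * P(feminist | teller)
p_both = p_teller * p_feminist_given_teller

print(p_both)              # 9/200
print(p_both <= p_teller)  # True
```

Even with a 90 percent chance that a bank-teller Linda is also a feminist, option "b" stays below option "a," because multiplying by a probability can never make a number larger.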

The Example Rule, also called the availability heuristic, guides your gut as well. It states that your gut is highly influenced by the ease with which an example comes to mind.

Consider earthquakes, for instance. The probability of an earthquake occurring is lowest right after one has just occurred. Earthquake predictions are also quite accurate and scientists issue warnings when an earthquake is expected to hit.

Despite these facts, earthquake insurance sales skyrocket after an earthquake occurs, not when scientists issue a warning beforehand. The example of the recent earthquake reminds us of the danger, even though it’s not logical for us to be concerned right after one hits.

Risk Key Idea #5: Our brains intuitively understand anecdotal evidence better than hard data.

“Breast implants cause cancer – I saw it on TV!” Statements or anecdotes like this are more powerful than you might think. Anecdotes are little stories about other people that we use to affirm certain beliefs or feelings.

Powerful anecdotes spread in 1994 when the American media ran several stories about women who had apparently developed connective-tissue diseases because of their silicone breast implants.

The media was flooded with stories of “toxic breasts” and “ticking time bombs,” but there was no real scientific evidence that the implants caused the diseases.

Despite this, the implant manufacturer Dow Corning was faced with a class action lawsuit that same year. In the end, it shelled out $4.25 billion to women with implants. Over half the women who had Dow Corning implants registered for the settlement and the company went bankrupt.

However, a 1994 study found there was no link between implants and connective-tissue disease. Panic had spread, and the company was destroyed, for no logical reason.

Part of the reason we take anecdotal evidence so seriously is that it’s difficult for us to understand numbers and probability. In fact, our innate mathematical skills aren’t much better than a rat’s or a dolphin’s.

Studies have shown that dolphins can tell the difference between two and four, and do simple addition, but they struggle when the numbers get higher than that. Likewise, humans can recognize that nine is larger than two, but are slower to recognize that nine is larger than eight. That’s why it takes so long to memorize basic arithmetic charts in school.

We’re also not great with probability. One study even found that we trust safety equipment more if we’re told it saves 85 percent of 150 lives, rather than 150 lives alone.
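The arithmetic makes the inconsistency plain: 85 percent of 150 is fewer lives than 150. A minimal check, with variable names chosen only for this example:

```python
lives_at_risk = 150
share_saved = 85  # percent

lives_saved = lives_at_risk * share_saved / 100
print(lives_saved)                  # 127.5
print(lives_saved < lives_at_risk)  # True
```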

Risk Key Idea #6: Companies and governments know how to manipulate us with fear.

Our faulty judgments have serious consequences. Unfortunately, the pharmaceutical industry and politicians use this to their advantage.

Pharmaceutical companies regularly use fear to manipulate us. Indeed, disease mongering is an accepted part of the industry. “Disease mongering” means that pharmaceutical companies don’t market pills to people who need them; instead, they try to convince customers that something is wrong with them, and it can be cured with a pill.

Dr. Jerome Kassirer, a professor at Tufts University, says this is why pharmaceutical companies spend so much money on marketing: they earn more by convincing people they’re sick.

The extent of this problem was revealed when GlaxoSmithKline’s confidential plan to sell their product Lotronex in Australia was leaked. Lotronex was supposed to be a cure for irritable bowel syndrome, or IBS.

GlaxoSmithKline’s plan encouraged doctors to think of IBS as vague so that it could be linked to common stomach problems. Nausea and bloating suddenly became symptoms of IBS.

The plan also called for the creation of a panel of “key opinion leaders” that would include people from a medical foundation associated with the plan’s drafters. After all this, Lotronex was found to cause serious – and even fatal – health problems.

Politicians manipulate us in a similar way: the phrase “politics of fear” is so common now it’s become a cliché.

For instance, political ads usually appeal to our emotions rather than presenting us with factual information. A study from the University of Michigan found that 79 percent of campaign ads were based on emotional appeal – and nearly half involved fear.

Consider the Iraq War. The US government drummed up support for it by playing on people’s fears of “weapons of mass destruction,” even though those fears ended up being unfounded.

Risk Key Idea #7: Faulty risk perception affects our understanding of crime.

Franklin D. Roosevelt made a good point when he said, “The only thing we have to fear is fear itself.” Just look at the media coverage of crime.

The stories considered most newsworthy aren’t about the events that are most prevalent in society. The media is obsessed with crime. Crime gets people’s attention; a decrease in crime does not.

Imagine if the government released a report that domestic violence had increased by two-thirds. The media would be all over it!

But what if domestic violence was proven to have decreased by two-thirds? Would the media care? No, it wouldn’t – and we know that for a fact because it happened in 2006.

In 2006, the US Bureau of Justice Statistics published a report on the two-thirds decline in domestic assaults. It went completely unreported by the media.

With all this fearmongering and the power of the Example Rule, it’s no wonder we live in a constant state of fear. We think danger surrounds us because we’re surrounded by stories about it.

It doesn’t make sense to fixate on rare crimes. Just take stories about child molestation. In 2007, stories of pedophilia dominated the American media. The US Attorney General, CNN’s Anderson Cooper and countless newspapers told parents to stay alert: predators lurked in the shadows.

Cooper gave an hour-long special on the topic. It was relatively restrained but still wildly exaggerated the threat. The show even went so far as to feature a segment where an expert taught children how to escape from the trunk of a car!

In fact, a study by NISMART, or the National Incidence Studies of Missing, Abducted, Runaway and Thrownaway Children, found that of the 797,000 underage Americans who went missing every year, only 115 were taken in pedophile-related kidnappings. There are 70 million children in the United States: the risk of being kidnapped is one in 608,696, or 0.00016 percent.

The chance of a child drowning in a swimming pool is roughly three times as high. We should be more afraid of swimming pools than pedophiles!
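The kidnapping odds quoted above follow directly from the two figures given: 115 kidnappings a year among 70 million children. A quick sketch of the calculation, with variable names chosen only for this example:

```python
children = 70_000_000
kidnappings_per_year = 115

one_in = round(children / kidnappings_per_year)
percent = kidnappings_per_year / children * 100

print(f"one in {one_in:,}")      # one in 608,696
print(f"{percent:.5f} percent")  # 0.00016 percent
```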

Risk Key Idea #8: Terrorism is one of our biggest fears but it’s far less of a threat than we think.

Since September 11, 2001, the world has been obsessed with terrorism. People fear it now more than ever, despite the fact that terrorist attacks have decreased!

In 2002, Gallup issued the results of a poll measuring the fear of terrorism in the United States. 52 percent of those polled said it was “very” or “somewhat” likely that another terrorist attack would occur within the next several weeks. That was a big drop from five months earlier when the figure was 85 percent.

Naturally, there was a surge in fear of terrorist attacks just after 9/11 because of the Example Rule. People’s guts told them the risk was high, but gradually their heads took over, which is why the figure decreased.

The figure was still at 50 percent four years later, however. And, more astounding than that, when people were asked how worried they were about family members falling victim to attacks, the figure had increased from 35 percent in 2002 to 44 percent in 2006.

If you’re afraid of dying, terrorism really shouldn’t be at the top of your list of worries. Even on 9/11, the chances of an American being killed in the attacks on that particular day were one in 93,000 or 0.00106 percent. The annual risk of being hit by a car is nearly twice as high.

Even if an attack of the same magnitude as 9/11 occurred every month for a year, the chance of dying would be roughly one in 7,750, or 0.0127 percent.

For comparison, there are 18,000 unnecessary deaths every year in the United States caused by a lack of health insurance. That’s six 9/11s every year. Terrorism is certainly horrific, but it has little impact on our everyday lives.
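As a rough check on the odds quoted above, one in 7,750 is what you get from twelve attacks on the scale of 9/11 in a single year, since 93,000 divided by 12 is 7,750. The helper below is a hypothetical illustration that simply adds up rare risks; it is an approximation, not a formal risk model.

```python
single_event_one_in = 93_000  # odds quoted for the 9/11 attacks


def one_in_after_repeats(one_in: int, repeats: int) -> float:
    """Approximate 'one in N' odds after `repeats` events of the same scale.

    Rare risks are simply added together, which ignores the negligible
    overlap between events.
    """
    return one_in / repeats


print(one_in_after_repeats(single_event_one_in, 12))  # 7750.0
```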

Risk Key Idea #9: Fearmongering makes us forget that we’re living in humanity’s most prosperous time.

Despite what we hear, ours are the best days humanity has ever seen.

All over the world, people now live longer than ever before. Countries use life expectancy to measure their wealth and level of development. The more access to health care and other public resources people have, the longer they live.

A 2006 study on global health trends by the World Health Organization found that as we move toward 2030, child mortality will fall and life expectancy will rise in every part of the world.

Robert Fogel, an economic historian and Nobel Laureate, believes that half the college students alive today will live to be 100 years old or more. The figures back him up: in 1950, the average life expectancy in the United States was only 68; by the end of the century, it was 78.

Life in the developing world is improving apace. From the 1980s to the 2000s, the percentage of malnourished people in the developing world dropped from 28 percent to 17 percent.

The United Nations Human Development Index (HDI), which measures income, health and literacy, is probably the best indication of a country’s overall prosperity. Niger is at the bottom of the list of 177 countries.

However, even Niger’s 2003 HDI score was 17 percent higher than its 1975 score. This trend is true throughout the developing world: Chad improved by 22 percent and Mali improved by 31 percent within the same time period.

So although our brains may be hardwired to misinterpret risk, and although others have tapped into this for their own gain, we need to remember that we’re living in the healthiest and wealthiest period of human history.

In Review: Risk Book Summary

The key message in this book:

We often perceive threats and risks inaccurately. Our brains have been programmed to respond to potential dangers impulsively and to understand the world around us by simplifying it. That’s why anecdotes have a bigger impact on some people than statistics. But even though governments, industries and media outlets exploit this, we’re living in humanity’s most prosperous era yet.

Actionable advice:

Don’t listen to insurance agents who hype natural disasters.

They’re playing to your fears. Read up on the scientific evidence instead – it’s much more reliable.